Article-level impact for web native scholarship

Scott Chamberlain

There are a lot of papers out there

We need a way to discover the impactful ones

We rank journals using the Journal Impact Factor (JIF)

JIF is not open

Calculated by a single for-profit company, without transparency

Measured at the journal level

Articles within a journal vary widely in impact [1]

[1]: Seglen, P. O. (1992), The skewness of science. J. Am. Soc. Inf. Sci., 43: 628–638.
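The two-year JIF is essentially a mean: citations received in a given year by items published in the previous two years, divided by the number of citable items from those two years. A mean summarizes a skewed distribution poorly. This R sketch simulates a hypothetical journal (the log-normal distribution and its parameters are assumptions chosen only to produce a right skew, not real data) and compares the journal-level mean with the typical article.

```r
# Hypothetical example: simulated citation counts for 1,000 articles in one journal.
# The log-normal draw is an assumption used only to mimic a right-skewed distribution.
set.seed(42)
citations <- floor(rlnorm(1000, meanlog = 1, sdlog = 1.2))

# A journal-level score like the JIF behaves like a mean over articles
journal_mean <- mean(citations)

# The median describes the "typical" article
typical_article <- median(citations)

# Share of articles that fall below the journal-level average
share_below_mean <- mean(citations < journal_mean)

cat(sprintf("mean = %.1f, median = %.1f, below mean = %.0f%%\n",
            journal_mean, typical_article, 100 * share_below_mean))
```

With a right skew, most articles sit below the journal average, so the journal's number overstates the impact of the typical paper in it.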

We judge papers, people, and schools on IFs

Frustration with the JIF

- 9,596 signatories

- Don't use the JIF

- Value all research outputs

Scholarly activities are increasingly moving to the web

Journals are more or less all online

- Many companies are collecting metrics on internet use

- We love to share things on the web

- CVs are online

- Etc., etc.

We can listen to these conversations

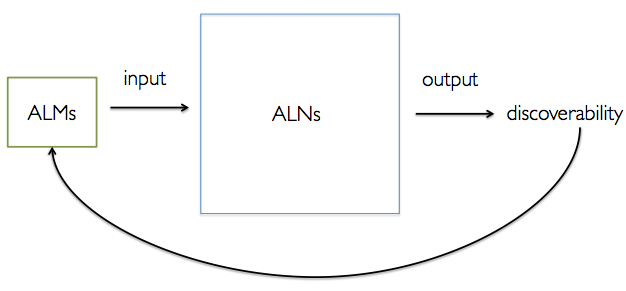

Altmetrics: new ways of measuring impact

Article-level metrics (ALMs): altmetrics for a single scholarly paper

Measured at the article level

Or measured at the level of any research object (e.g., software, datasets)

ImpactStory just got an NSF grant to track software reuse and impact

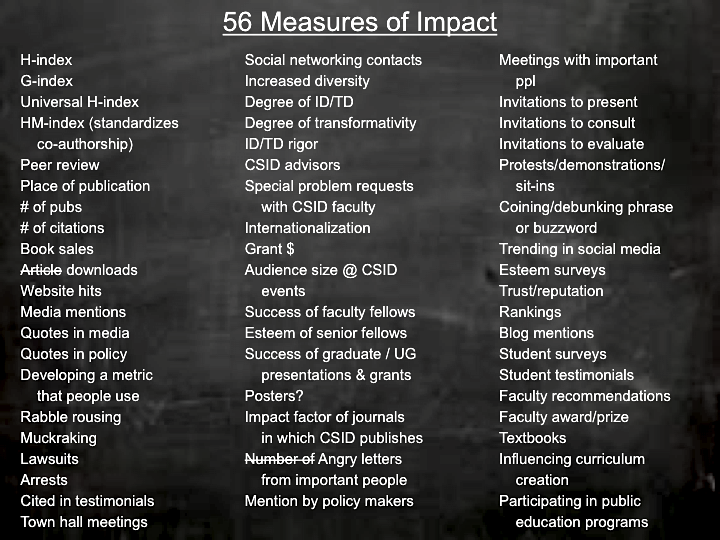

Altmetrics measure diverse impacts

- Usage: HTML, XML, and PDF downloads

- Citations: Scopus, PubMed Central, Crossref

- Social media: Twitter, Mendeley, Facebook, etc.
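Keeping these kinds of impact separate, rather than collapsing them into one number, is easy to sketch in R. The article's values here are made up; the column names mirror the outdf columns passed to plot_density() in this talk, and the grouping of sources into categories is an assumption (Mendeley is counted as social media, as in the list above).

```r
# Hypothetical metrics for a single article; values are made up, and the
# column names mirror those used with plot_density() in this talk.
article <- data.frame(
  counter_html = 1200, counter_pdf = 340,
  crossref_citations = 5, pubmed_citations = 3, scopus_citations = 7,
  twitter_total = 15, mendeley_total = 22, facebook_total = 4
)

# Assumed grouping of sources into the three kinds of impact
categories <- list(
  usage    = c("counter_html", "counter_pdf"),
  citation = c("crossref_citations", "pubmed_citations", "scopus_citations"),
  social   = c("twitter_total", "mendeley_total", "facebook_total")
)

# Sum each group separately, keeping one number per kind of impact
by_category <- sapply(categories, function(cols) sum(unlist(article[cols])))
print(by_category)
```

The three totals differ by orders of magnitude, which is one reason to report them side by side instead of adding them up.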

Altmetrics can distinguish scholarly from public impact

- Scholarly: Citations, shares by scholars, etc.

- Public: News articles, shares by non-scholars, etc.

Diverse set of providers

Which...

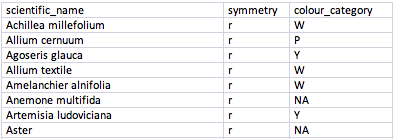

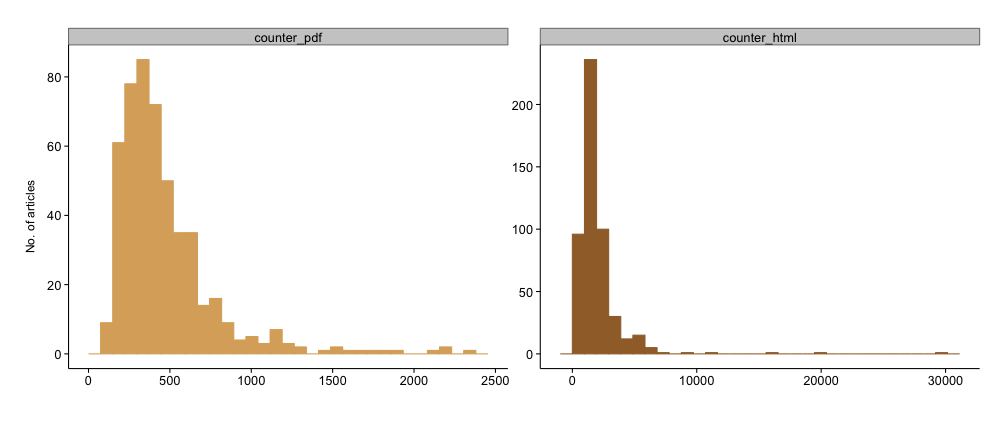

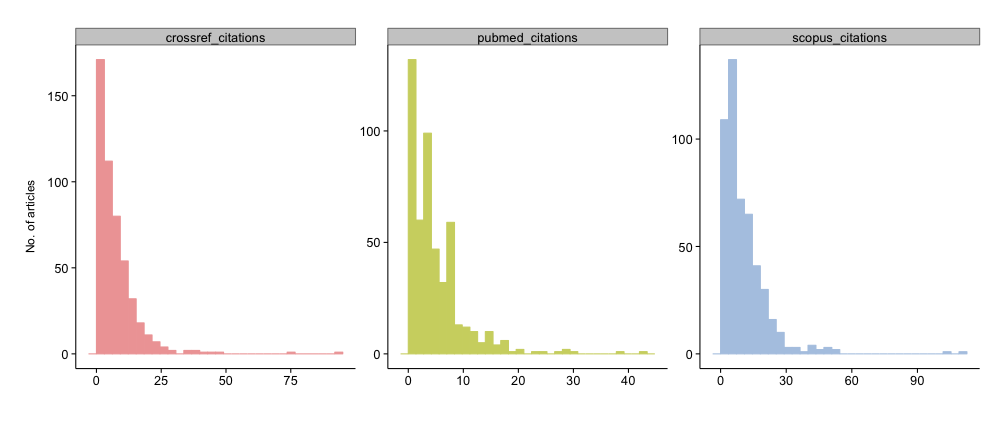

Using a set of 500 papers published in PLOS ONE in 2010

Usage

- html, xml, pdf downloads, collected by publishers

plot_density(outdf, source = c("counter_pdf", "counter_html"),
  color = c("#DBAC6A", "#A06D34"), plot_type = "histogram")

Citation

- Scopus, PubMed Central, Crossref

plot_density(outdf, source = c("crossref_citations", "pubmed_citations", "scopus_citations"),
  color = c("#EFA5A5", "#CFD470", "#B2C9E4"), plot_type = "histogram")

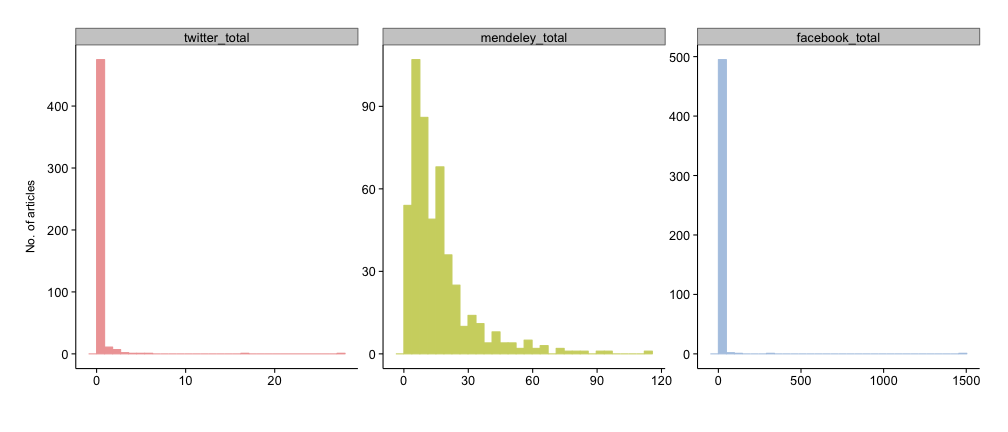

Social media

- Twitter, Mendeley, Facebook, etc., collected by altmetrics aggregators

plot_density(outdf, source = c("twitter_total", "mendeley_total", "facebook_total"),
  color = c("#EFA5A5", "#CFD470", "#B2C9E4"), plot_type = "histogram")

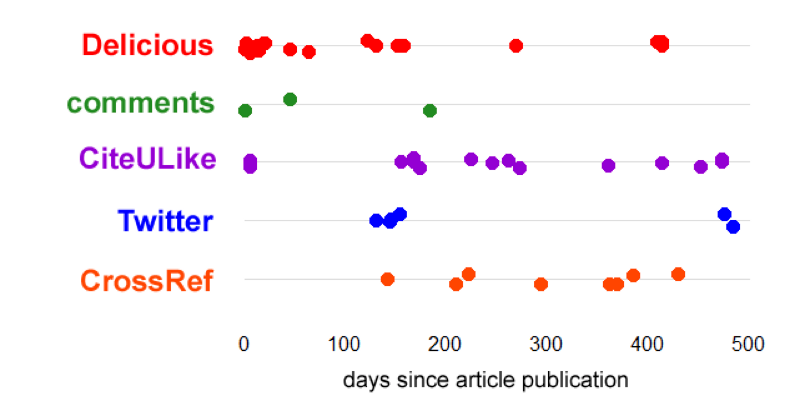

Accumulation over time

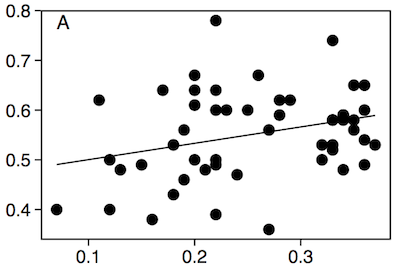

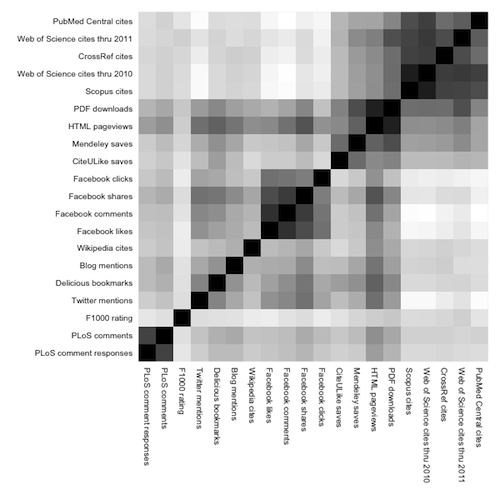
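Altmetrics are counts that pile up over time, so an older article will usually show larger totals than a newer one, and comparing articles fairly means comparing them at the same age. A minimal sketch with hypothetical monthly counts for one article: cumsum() gives the running total an aggregator would report, and the month by which half the first-year total was reached is one possible way to describe how quickly attention accumulates.

```r
# Hypothetical monthly counts of new events (e.g., HTML views) for one article
monthly <- c(900, 400, 250, 150, 120, 100, 90, 80, 70, 60, 55, 50)

# Running total: what an aggregator would report at the end of each month
cumulative <- cumsum(monthly)

# First month in which the running total reaches half of the first-year total
half_month <- which(cumulative >= sum(monthly) / 2)[1]

print(cumulative)
cat("Half of first-year events reached by month", half_month, "\n")
```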

Correlation

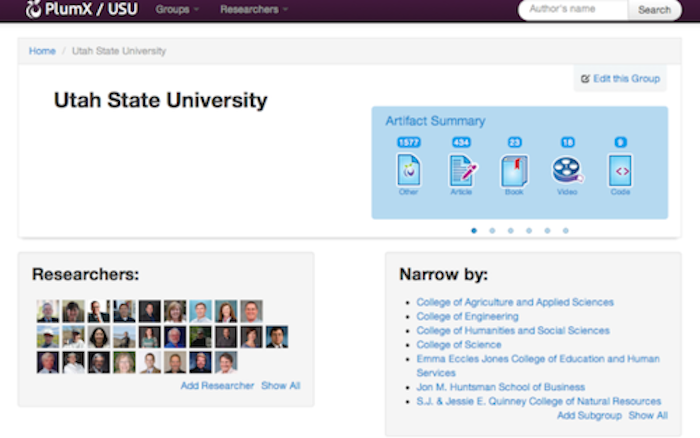
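Because these metrics are heavily right-skewed, a rank-based (Spearman) correlation is a reasonable choice when asking whether, say, views and citations go together; a few very popular articles would otherwise dominate a Pearson correlation. The numbers in this sketch are hypothetical, for eight made-up articles.

```r
# Hypothetical counts for eight articles; values are illustrative only
views     <- c(1500, 3200, 800, 12000, 2100, 950, 4000, 600)
citations <- c(4, 9, 1, 20, 6, 2, 7, 0)

# Spearman correlation works on ranks, so the single 12,000-view
# article does not dominate the result
rho <- cor(views, citations, method = "spearman")
cat(sprintf("Spearman rho = %.3f\n", rho))
```

With real data, cor() also accepts a matrix of several metrics and returns the full correlation matrix, which is one way to build a plot like the one above.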

Plum Analytics

- Institutions subscribe to get altmetrics dashboards for their faculty and staff

- Unclear whether institutions actually evaluate people on these metrics

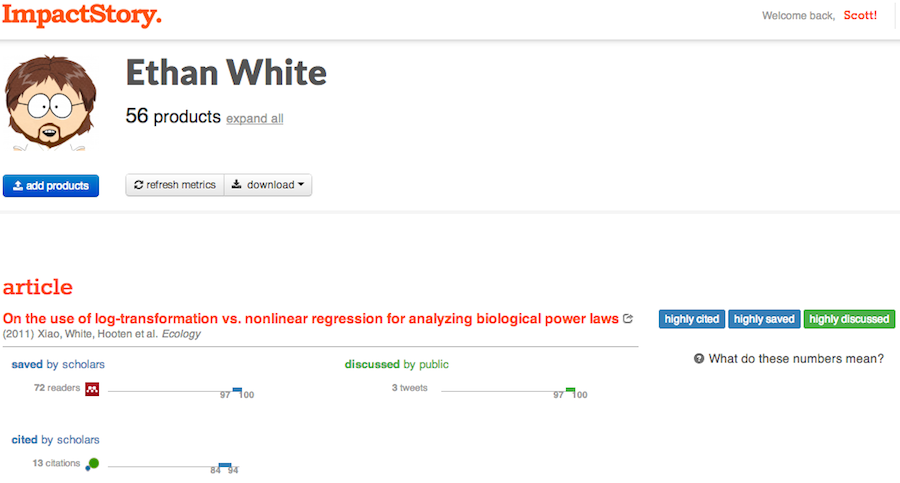

ImpactStory

- Pushing their profiles as the new CV

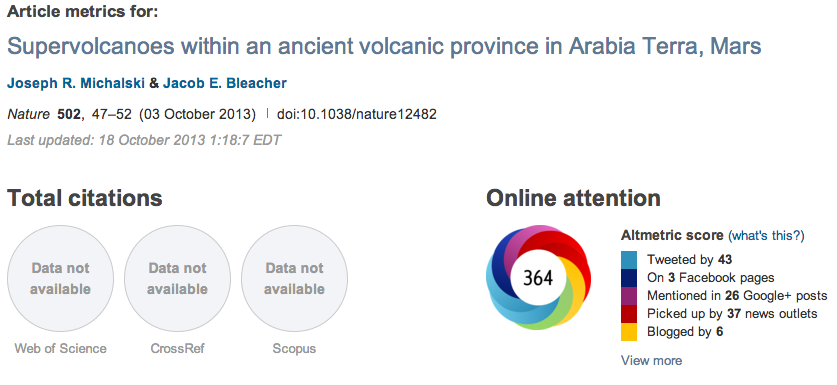

Altmetric.com

- Publishers display Altmetric.com metrics on their article pages

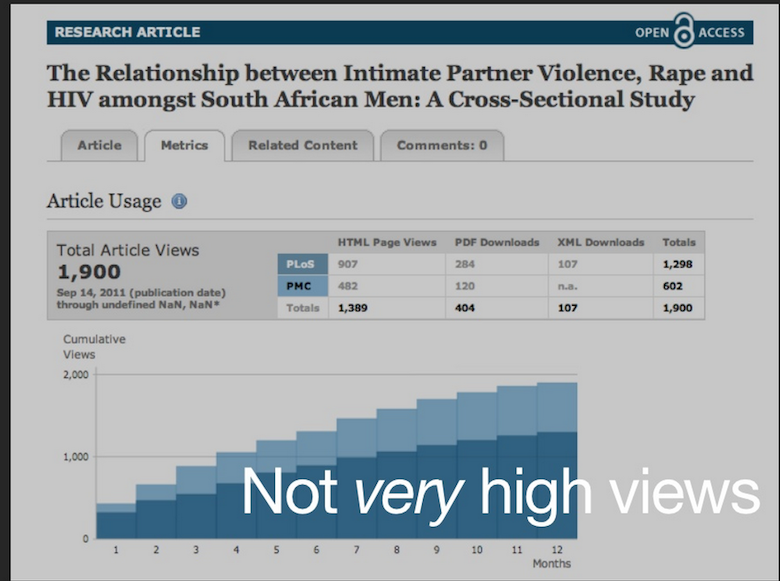

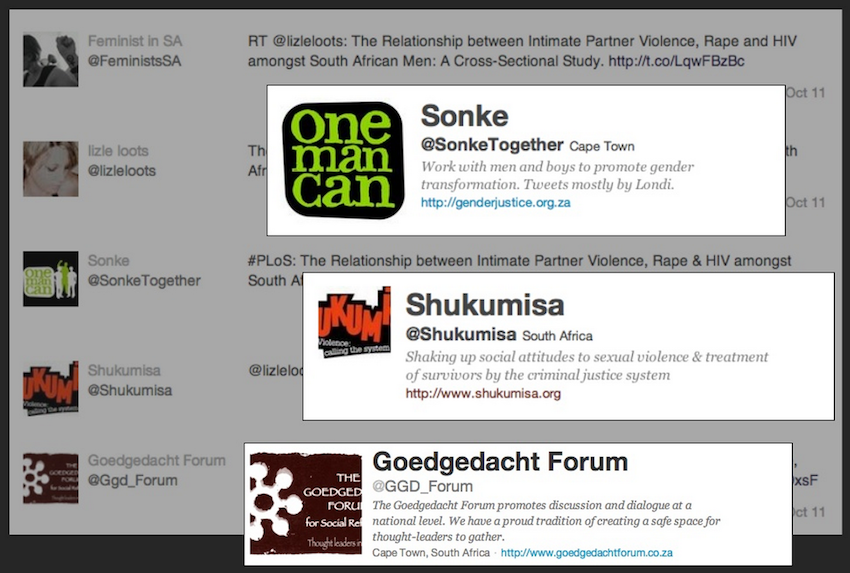

A story about real impact

From a talk by Cameron Neylon

An article without strikingly large page views

But with highly relevant interactions

Anecdotes

- Academics report altmetrics on their CVs and say that doing so may have helped them get tenure

- Stories of use in hiring and tenure decisions, but no hard data (e.g., this)

- Some have included altmetrics in their applications to become PeerJ editors

- The Wellcome Trust has been considering using altmetrics in evaluations

- Amy Brand (Harvard) on evaluations: evaluators don't look at the journal name, but do look at citations; other research products are "leaking in"

- SSRN uses downloads as a metric of relative impact/use

- Wikipedia mentions can be particularly interesting, since Wikipedia acts as a textbook on the web

Many OA journals don't select for impact

Quickly accumulating altmetrics can help filter articles after publication

7 of the 10 most popular articles of 2012, as measured by Altmetric.com, were open access; none were from Science or Nature; most of the chatter about them came from non-scientists, which wouldn't have been apparent from citations [1]

[1]: Mounce, R. (2013), BAIST.

Filtering using altmetrics

- Largely unrealized so far; would be especially useful for mega OA journals

- Jevin West
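One simple way to filter, sketched under assumptions: rank articles on each metric, average the ranks, and keep the top k. The article IDs and counts are hypothetical, and rank averaging is just one possible scoring rule, not a method used by any of the aggregators or people named in this talk.

```r
# Hypothetical post-publication metrics for six articles (IDs are placeholders)
papers <- data.frame(
  id       = c("A", "B", "C", "D", "E", "F"),
  twitter  = c(3, 40, 0, 12, 5, 1),
  mendeley = c(10, 35, 2, 30, 8, 4),
  stringsAsFactors = FALSE
)

# Rank within each metric (higher count gets a higher rank), then average ranks
papers$score <- rowMeans(sapply(papers[c("twitter", "mendeley")], rank))

# Keep the top k articles as a simple post-publication filter
k <- 2
top <- papers[order(-papers$score), ][seq_len(k), ]
print(top)
```

Ranks rather than raw counts keep a metric with large numbers (e.g., Mendeley readers) from swamping one with small numbers.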

Data via the alm R package, an interface to the PLOS ALM API

library(alm)

alm(doi="10.1371/journal.pone.0029797")

Slot "summary":
  views shares bookmarks citations
1 29229    237        51         7

Slot "data":
        .id pdf html shares groups comments likes citations total
1 bloglines  NA   NA     NA     NA       NA    NA         0     0
2 citeulike  NA   NA      1     NA       NA    NA        NA     1
3  connotea  NA   NA     NA     NA       NA    NA         0     0
4  crossref  NA   NA     NA     NA       NA    NA         7     7
5    nature  NA   NA     NA     NA       NA    NA         4     4
...
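The "data" slot can then be summarized like any data frame. This sketch rebuilds only the five rows printed above as a plain data.frame (the remaining sources, elided as "..." in the output, are not filled in), then keeps the sources that recorded any activity, ordered by total. How the slot is extracted from the object returned by alm() is not shown here.

```r
# Only the five rows printed above; the elided sources are not filled in
alm_data <- data.frame(
  .id       = c("bloglines", "citeulike", "connotea", "crossref", "nature"),
  shares    = c(NA, 1, NA, NA, NA),
  citations = c(0, NA, 0, 7, 4),
  total     = c(0, 1, 0, 7, 4),
  stringsAsFactors = FALSE
)

# Keep sources with any recorded activity, largest total first
active <- alm_data[alm_data$total > 0, ]
active <- active[order(-active$total), ]
print(active[, c(".id", "total")])
```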

What I'm worried about

- Data consistency: aggregators don't collect data in the same way, e.g., for Twitter: ImpactStory (via Topsy), Altmetric (via the Twitter API), PlumAnalytics (via Topsy), PLOS ALM (unclear)

- Data provenance: current aggregators track this, but newcomers may not, and some provenance we'll never know

- Long-term sustainability: Web resources are ephemeral - how do we ensure data persistence?

- Openness:
  - open data
  - transparent calculations
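The consistency and provenance worries can be made concrete by storing each count alongside where it came from and when it was retrieved. In this sketch the aggregator names and upstream sources follow the list above (PLOS ALM's source is left as NA, i.e. unknown), while the tweet counts and the 25% tolerance are hypothetical.

```r
# Hypothetical tweet counts for one article, one row per aggregator.
# Upstream sources follow the talk; the counts themselves are made up.
tweets <- data.frame(
  aggregator = c("ImpactStory", "Altmetric", "PlumAnalytics", "PLOS ALM"),
  upstream   = c("Topsy", "Twitter API", "Topsy", NA),  # NA = provenance unknown
  count      = c(14, 19, 13, 11),
  retrieved  = Sys.Date(),  # record when each value was collected
  stringsAsFactors = FALSE
)

# Spread between aggregators, relative to the median report
spread <- (max(tweets$count) - min(tweets$count)) / median(tweets$count)
inconsistent <- spread > 0.25  # hypothetical tolerance

# Rows whose upstream source is unknown
unknown_provenance <- tweets$aggregator[is.na(tweets$upstream)]

cat(sprintf("relative spread = %.2f, flagged = %s\n", spread, inconsistent))
print(unknown_provenance)
```

Storing the upstream source and retrieval date with every number is what makes a discrepancy like this traceable later, even after a source such as Topsy disappears.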

¿Standards for altmetrics?

- NISO to the rescue!!!

- $207K grant from the Sloan Foundation

- Standards:
  - Granularity of data collected
  - Duration of metrics
  - Role of social media in altmetrics
  - Data sharing

Resources

- Follow the #altmetrics hashtag on Twitter and Google+

- Blogs of PLOS ALM, Altmetric.com, ImpactStory, and PlumAnalytics

- Altmetrics.org

- R packages for programmatic access to altmetrics data